Better Images of AI: An Important Discussion for Society

This blog post is written by Elo Esalomi, a Year 13 student from London, about her experience of the Better Images of AI workshop held during London Data Week in summer 2023. Supported by LOTI, the workshop gave participants the chance to learn how to represent AI visually in a responsible and accurate way.

Around 50 people joined the Better Images of AI workshop at the Science Gallery in July 2023 as part of London Data Week.

Artificial intelligence (AI) has become an important talking point in our society due to the spread of generative AI tools (such as ChatGPT or Bing) that we have witnessed over the last few months. This is why the Science Gallery’s newest exhibition ‘AI: Who’s Looking After Me?’ seemed especially timely as it explored various examples of how AI is already being used in our daily lives and its potential usage in society as these technologies improve.

After attending this exhibition, I was lucky enough to be invited to the Better Images of AI Workshop, hosted at the Science Gallery during the first ever London Data Week, a week of events to learn, create, discuss, and explore how to use data to shape our city for the better. Supported by the London Office of Technology and Innovation (LOTI), the workshop aimed to create images that represented our reactions to, and views on, various aspects of AI. This seemed both therapeutic and informative, and I hoped to delve deeper into the topics that were introduced in the exhibition.

The first thing I noticed when I arrived at the Gallery was the diversity of people who had decided to attend this workshop on a rainy Tuesday. Students, tech experts, artists and those simply interested in AI and its social impacts had congregated outside the venue; a range of races, ages, and genders converging around this increasingly important topic. This gave me a good feeling coming into the workshop, as I knew that there would be a great diversity of perspectives, which would enable more thought-provoking discussions.

When we entered the workshop space, attendees were seated around tables filled with pens, paper of different sizes, and tablets to aid us with our drawings. At 3pm, the workshop began. First, we heard from Tania Duarte, who spoke of the harmful practice of “anthropomorphising” AI systems in the media (that’s when AI systems are made to look like humans). This was followed by an enlightening talk led by Dr Rob Elliot Smith about how some generative AI tools (text-to-image ones such as MidJourney) operate. One example that particularly stuck with me was the use of the term ‘hallucinations’ to describe when large language models (LLMs – the backbone of many generative AI tools) generate false information. The term ‘hallucinations’ suggests that LLMs have ‘human-like’ brains and are sentient, which is false. Anthropomorphising AI tools reduces transparency for the general public and increases unfounded fears of a ‘Terminator’-style uprising in a dystopian future. This example alone shows the importance of framing, and of creating images of AI that are not stereotypical but instead emphasise the role people play in the design, development, deployment and usage of AI systems.

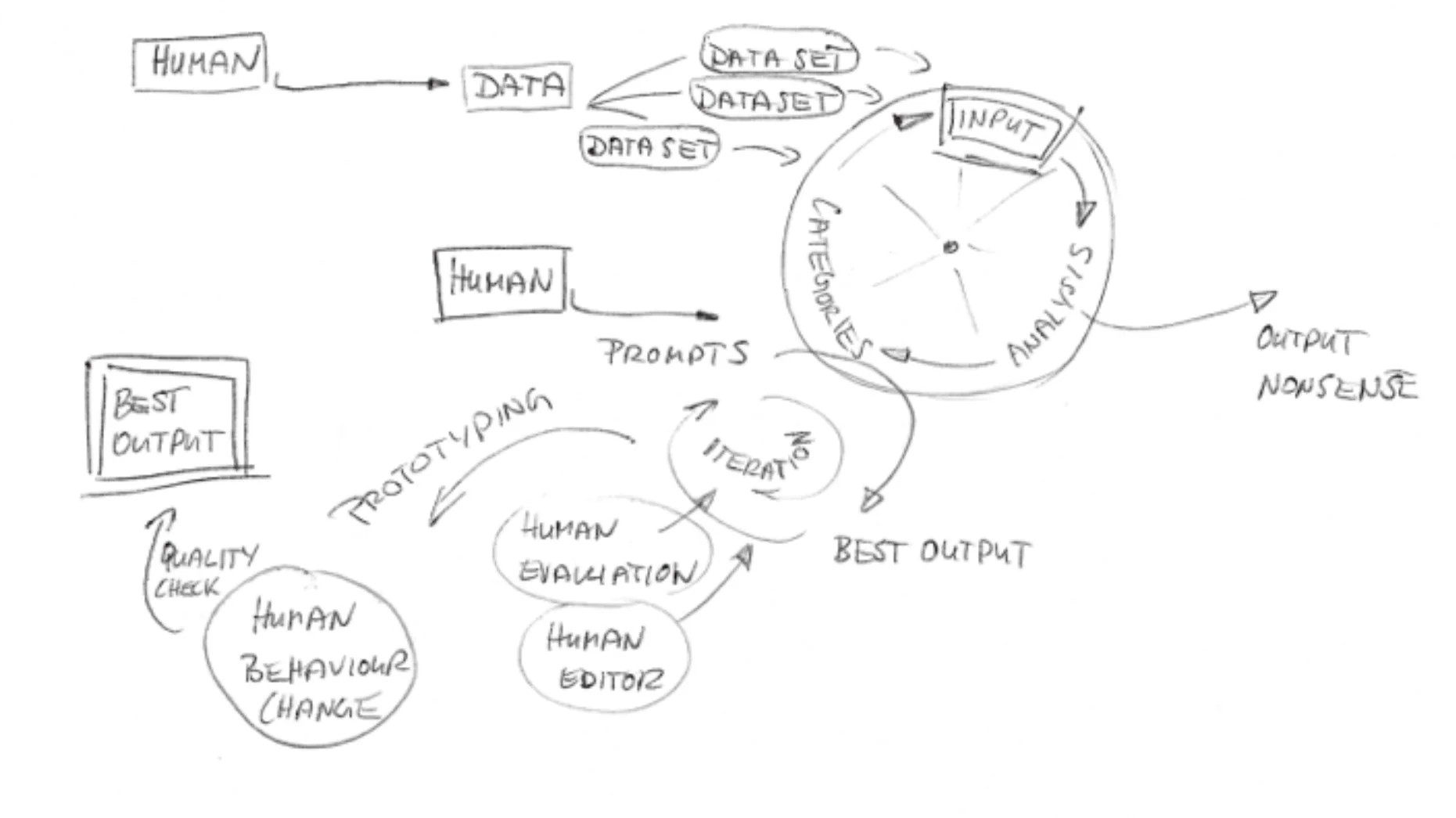

– Marie Janine Murann (during workshop): Abstract cogwheels suggesting that AI tools can be quickly developed to output nonsense but, with adequate human oversight and input, AI tools can be iteratively improved to produce the best outputs they can.

We were then treated to an interesting discussion with Tamsin Rooney, a physicist-turned-machine-learning (ML) researcher, and Yasmine Boudiaf, a creative technologist and author, who introduced our first drawing challenge. They outlined the process of creating LLMs and the factors that need to be considered during their production, such as clean and diverse datasets, cost, and environmental impact. This was followed by the first of two 10-minute drawing sessions. Based on the information that had been provided so far, we drew what came to mind with the pens and paper on the table. Several people then presented their sketches and thought processes, and I found it fascinating how varied people’s interpretations were after being given the same information.

The second drawing challenge was led by Emily Rand, an illustrator and author, and Sam Nutt, a researcher and Data Ethicist at LOTI, and covered the uses of LLMs in public services run by councils. Sam detailed how AI could be used for admin purposes and sentiment analysis. However, questions of bias were raised. For example, if English were your second language and you were not able to effectively convey your stress or anxieties through an email, would an automated sentiment analysis algorithm adequately prioritise your request? Emily’s creative prompts and Sam’s responses were an excellent way to explore the impact of generative AI on public services and the role these tools might effectively play.

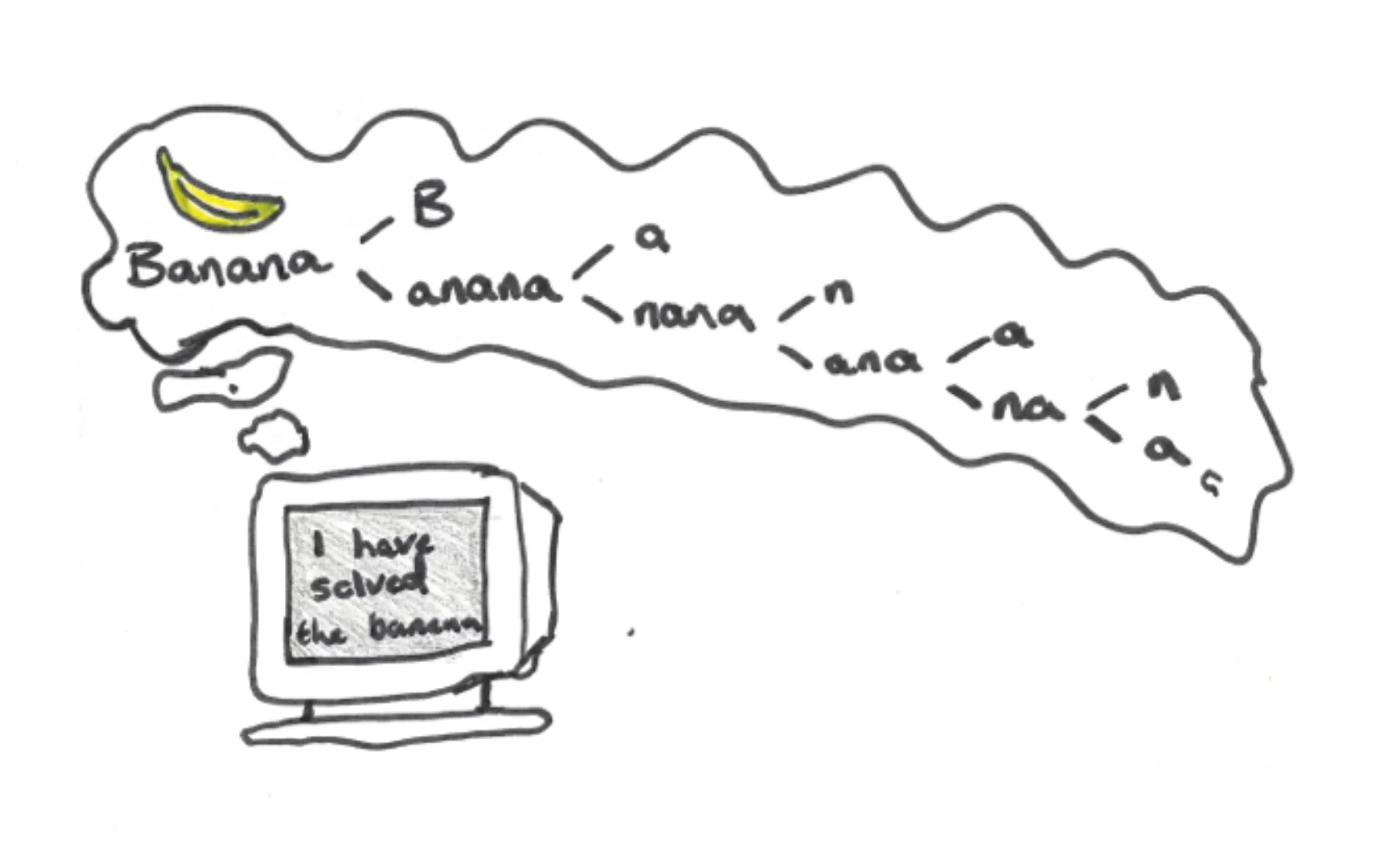

– Participant (during workshop): A computer claims to have “solved the banana” after listing the letters that spell “banana” – whilst a seemingly analytical process has been followed, the computer isn’t providing much insight or solving any real problem.

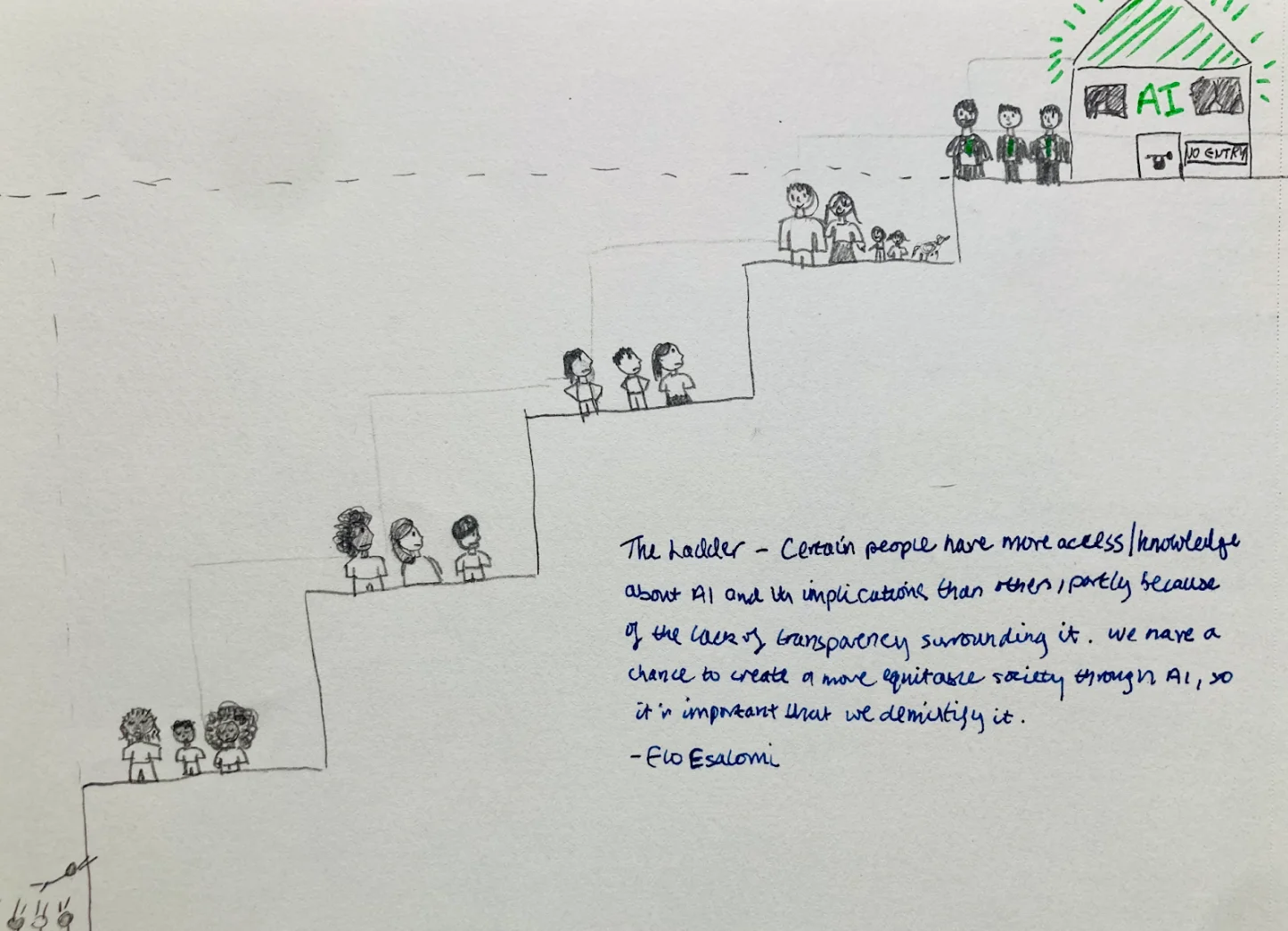

During the second 10-minute drawing session, I decided to illustrate the biases and obstacles that minority groups face when trying to access the benefits of AI and the fundamental knowledge that comes with it. I visualised this idea as a staircase, with diverse groups of people attempting to reach the elusive pinnacle of AI and knowledge, which was guarded by so-called experts. With the staircase, I attempted to convey the need to demystify AI and challenge preconceived notions about it, so that we can create an equitable and transparent society instead of gatekeeping knowledge for the privileged. I then presented this drawing to the entire group, alongside three other attendees, who had each represented their own unique takes on the types of bias and inequality that arise from AI.

The image created by Elo Esalomi during the workshop.

By the end of this two-hour workshop, I had learned so many new things as a student who, though deeply interested in AI, had not previously had the opportunity to engage with some of the more difficult and philosophical concepts that relate to it. The interactive way the event was run was key to its impact. Better still, some of the drawings will shortly be launched onto the Better Images of AI gallery, thanks to LOTI’s support!

Elo Esalomi