Putting in place the enabling conditions for AI

Recently, I’ve been visiting the leadership teams of a number of London boroughs, all of whom are asking the same question: “How do we get the most out of AI?”

They’ve heard about the promise of greater efficiencies, cost savings, the ability to personalise, speed up and offer interactions at scale. Yet many feel unsure what it takes to achieve those results.

In this blog, I set out some of the key messages I’m sharing in response.

The AI Illusion

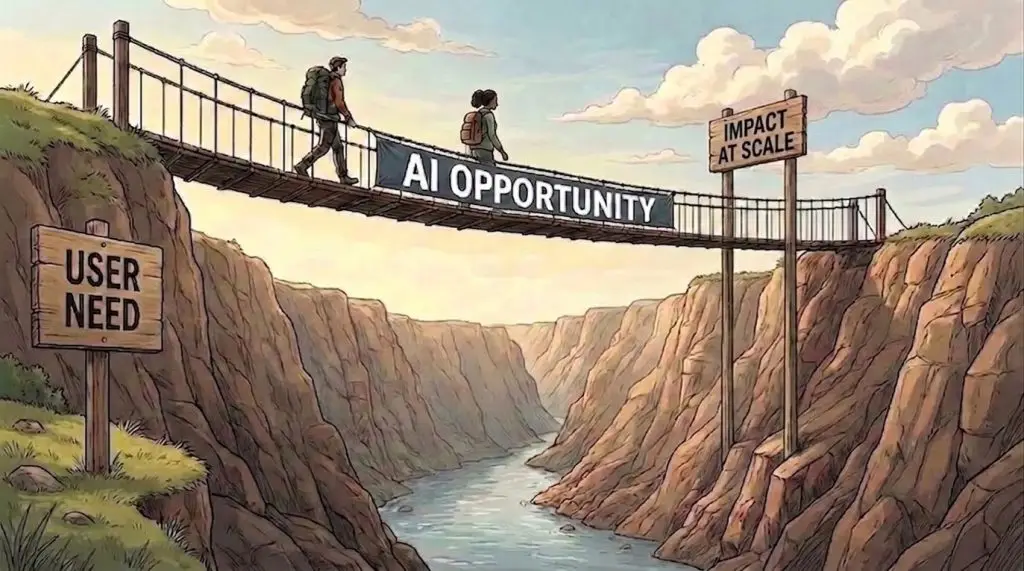

At LOTI, we often think of achieving outcomes as being like going on a journey. We set a destination (or a “preferred future”) and then ask: what does it take to get there?

In the case of AI, we might picture this as crossing a river. On the right-hand bank we have the desired goal of achieving a positive impact at scale. On the left, we have unmet user needs. The question leaders are asking is: How do we cross the river using AI?

The problem is that this image is too simplistic. Many organisations seem to be making the same mistake we’ve been trying to undo in digital government for the past 15 years: thinking that this is just about selecting and rolling out the right tool.

If only it were that simple. Here’s the truth: you cannot procure your way out of hard organisational change.

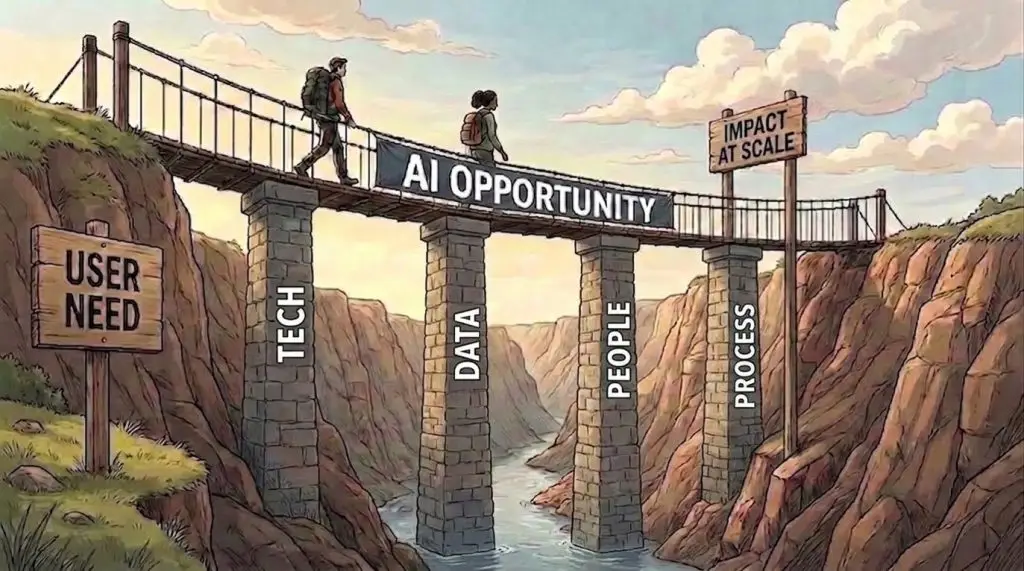

If we want a better picture, we need to acknowledge that the bridge of AI opportunity needs to be supported by at least four pillars, representing tech, data, people and process.

Exploring the pillars

Let’s start with tech.

There may be a few AI applications that stand alone. But the vast majority will need to interface with councils’ existing systems. If those systems are big legacy behemoths that lack APIs (let alone Model Context Protocol support) for exchanging data and sharing functionality, then we’re likely to struggle.

If our technology procurement processes have been geared towards procuring massive IT systems, we’ll find it hard to even test new tools that might only cost tens of pounds. If our governance favours multi-year contracts, we’ll rapidly accumulate technical debt when AI tools are completely superseded by a new product every five months.

And we need a serious conversation about digital sovereignty. If we’re going all-in on new AI tools, do we have the ability to make intelligent assessments of which suppliers align with our values and our need for cyber resilience and data protection? Furthermore, given the massive volatility of AI investments, are we clear-sighted about the level of risk we’re exposing ourselves to if a major AI supplier collapses tomorrow?

Councils also need to think about data.

If our data is poor, AI will help us make faster bad decisions.

If we use resident data in AI in ways that spook our residents, we’ll set the whole positive use of AI back by years.

Councils need to think about people.

If we don’t involve residents, staff and other service users in the design of AI-enabled interventions, what are the chances they’ll positively engage with them? The history of public sector digital transformation is littered with cases of people voting with their feet and opting out of using the tools we designed for them rather than with them.

Are we bringing multi-disciplinary teams to bear on designing AI-enabled interventions? Councils can’t delegate public sector reform to their IT department. We need colleagues from user research, service design, policy, behavioural science and so on working together.

Are our AI initiatives leaving our staff augmented or diminished? Sure, experienced staff using AI tools right now might gain some productivity by using a tool that creates notes for them or drafts a report. But three years from now, might we have systematically undermined the very skillset that enables those staff to critically assess and edit those documents? Doing so risks building up a skills debt.

And lastly, we need to be cautious about the false economy of making human things transactional. Just because we can do something with a chatbot, are we clear on where that’s a good thing, and where people need to be seen, heard and validated by a real human being? Or as my LOTI colleague Sarbjit Bakhshi puts it: “Do we have humans in meaningful places?”

And we need to think about process.

We cannot simply bolt on new tech to the same processes and ways of working we’ve had for a decade and expect profound change. We need to fundamentally reinvent some of our service models for a world in which AI is part of a much larger toolkit. Adding AI to existing processes runs the risk of simply moving the demand bottleneck to another part of the service.

We also need to think about processes as we trial AI. According to Forbes, 95% of AI initiatives never get beyond the pilot. So here’s my question to councils: are you any good at experimenting? In local government, our behaviours and rules sometimes make it incredibly hard.

For example, we often fall prey to the fantasy business case. In order to start something new, we ask teams to first state the expected ROI (Return on Investment) and benefits. That’s fine in a completely controlled environment where all the parameters are known. But that’s not the world we operate in for most local government contexts. The constantly changing nature of AI creates another uncertainty. Asking for a traditional business case before we begin a trial is asking our teams to hallucinate with false confidence into an unknowable future.

Instead, councils need to try new tools and methods in micro-increments. I sometimes describe this with a sailing ship analogy. The job of leadership teams is to describe a beautiful island destination they want to reach. They can then judge their teams by their ability to act like a sailing ship that is constantly course correcting to reach that destination.

I’ve also had some council leadership teams tell me: “We only have time for one innovation this year. It must deliver cashable savings and it must work”. That sets an impossibly high bar. The nature of innovation is trying something new. The whole point is that we don’t have certainty that it will work. If you must measure ROI, the smarter strategy is to spread your bets and try multiple different things at the same time. Measure ROI on a portfolio of experiments, not just one.
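To make the portfolio point concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the four trials, their costs and their benefits are invented rather than drawn from any real council, and the `Experiment` class and `portfolio_roi` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    name: str
    cost: float     # total spend on the trial, in pounds
    benefit: float  # measured benefit attributed to the trial, in pounds


def roi(cost: float, benefit: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    return (benefit - cost) / cost


def portfolio_roi(experiments: list[Experiment]) -> float:
    """ROI across all experiments, pooling their costs and benefits."""
    total_cost = sum(e.cost for e in experiments)
    total_benefit = sum(e.benefit for e in experiments)
    return roi(total_cost, total_benefit)


# Hypothetical figures, invented purely for illustration.
portfolio = [
    Experiment("meeting-notes assistant", cost=10_000, benefit=0),
    Experiment("contact-centre triage", cost=10_000, benefit=4_000),
    Experiment("planning document search", cost=10_000, benefit=5_000),
    Experiment("report drafting tool", cost=10_000, benefit=45_000),
]

for e in portfolio:
    print(f"{e.name}: ROI {roi(e.cost, e.benefit):+.0%}")
print(f"Portfolio: ROI {portfolio_roi(portfolio):+.0%}")
```

With these made-up figures, three of the four trials lose money when judged individually, yet the portfolio as a whole returns 35%. A council that had backed only one of them would most likely have recorded a failure.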

Which leads to a final point: if we ever want to get beyond pilots we need to get better at evaluation. If we don’t start with a good baseline, and if we don’t measure the change we’re expecting, we won’t ever have compelling evidence to make the case for further investments needed to scale.

Conclusion

Councils can and should be asking themselves what AI can do for them. But if they want to realise those opportunities, it needs to be a whole-organisation endeavour. Like all technologies, AI doesn’t exist in a vacuum. Using it well requires having the enabling organisational conditions in place.

If that sounds like harder work, it is. But I think it’s actually an empowering message for council leadership teams: the future of local government isn’t just about IT, but the wholesale change of our sector to operate in a world where AI is another tool in our toolkit. And that will require all the talents.

If you’re a LOTI member and would like an AI session for your leadership team, please get in touch.

Eddie Copeland